forked from akanazawa/hmr

Commit

Showing 59 changed files with 2,819 additions and 347 deletions.


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -1 +1,6 @@ | ||

| .DS_Store | ||

| models/ | ||

| logs*/ | ||

| *.json | ||

| *.DS_Store | ||

| *.pyc | ||

| forrelease.sh |


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -1,8 +1,72 @@ | ||

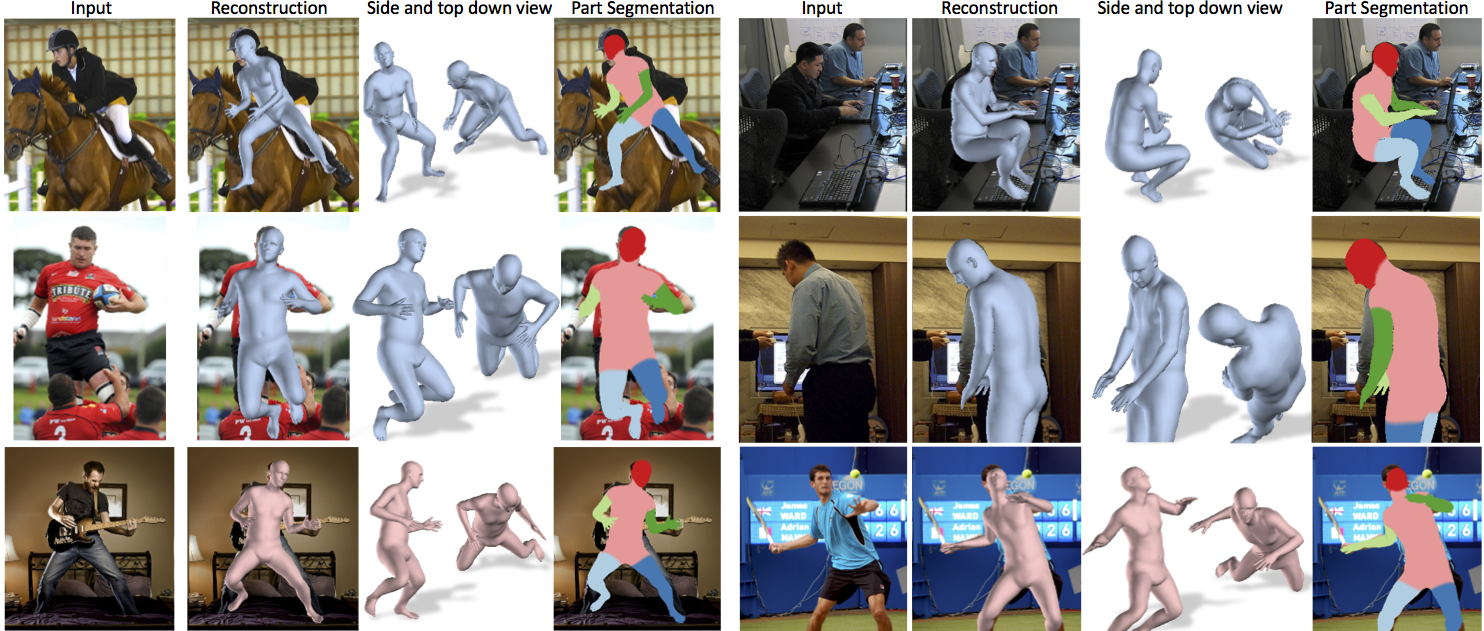

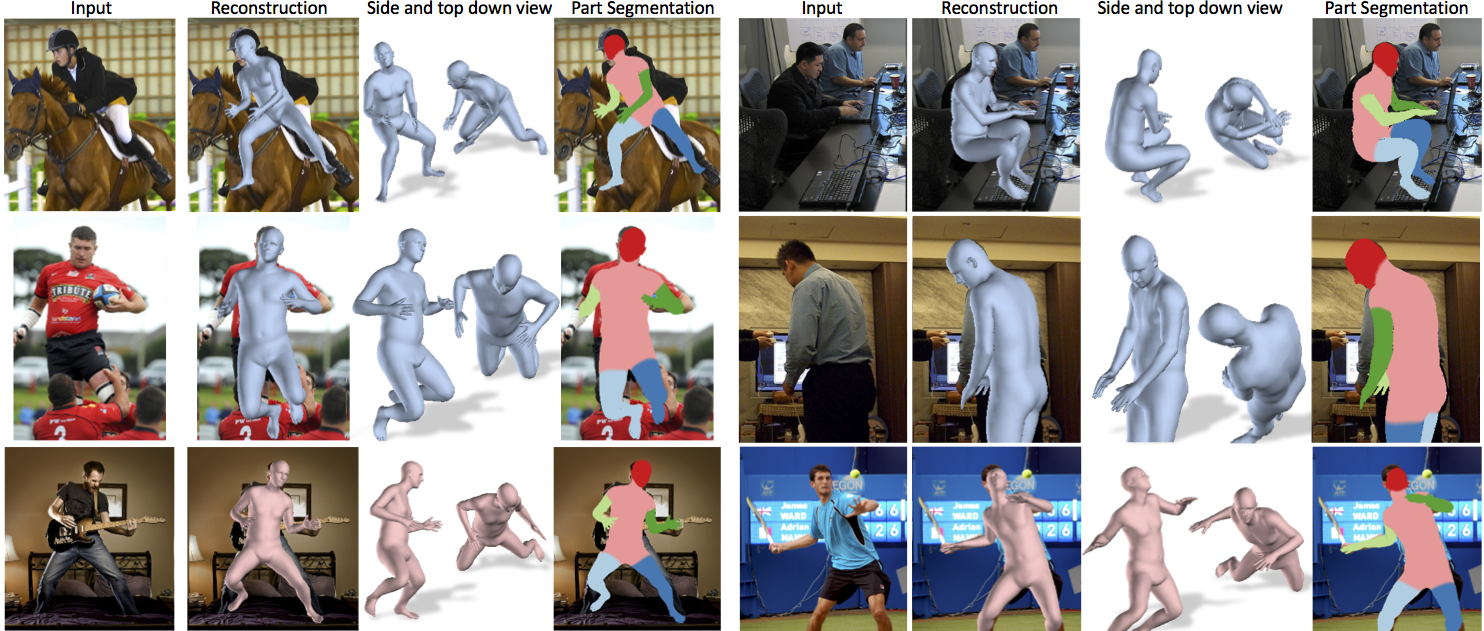

| # End-to-end Recovery of Human Shape and Pose | ||

|

|

||

| Angjoo Kanazawa, Michael J. Black, David W. Jacobs, Jitendra Malik | ||

| CVPR 2018 | ||

|

|

||

| [Project Page](https://akanazawa.github.io/hmr/) | ||

|  | ||

|

|

||

| ### Requirements | ||

| - Python 2.7 | ||

| - [TensorFlow](https://www.tensorflow.org/) tested on version 1.3 | ||

|

|

||

| ### Installation | ||

|

|

||

| #### Setup virtualenv | ||

| ``` | ||

| virtualenv venv_hmr | ||

| source venv_hmr/bin/activate | ||

| pip install -U pip | ||

| deactivate | ||

| source venv_hmr/bin/activate | ||

| pip install -r requirements.txt | ||

| ``` | ||

| #### Install TensorFlow | ||

| With GPU: | ||

| ``` | ||

| pip install tensorflow-gpu==1.3.0 | ||

| ``` | ||

| Without GPU: | ||

| ``` | ||

| pip install tensorflow==1.3.0 | ||

| ``` | ||

|

|

||

| ### Demo | ||

|

|

||

| 1. Download the pre-trained models | ||

| ``` | ||

| wget https://people.eecs.berkeley.edu/~kanazawa/cachedir/hmr/models.tar.gz && tar -xf models.tar.gz | ||

| ``` | ||

|

|

||

| 2. Run the demo | ||

| ``` | ||

| python -m demo --img_path data/coco1.png | ||

| python -m demo --img_path data/im1954.jpg | ||

| ``` | ||

|

|

||

| On images that are not tightly cropped, you can run | ||

| [openpose](https://github.com/CMU-Perceptual-Computing-Lab/openpose) and supply | ||

| its output JSON (run it with the `--write_json` option). | ||

| When `--json_path` is specified, the demo computes the scale and bbox center needed to run HMR: | ||

| ``` | ||

| python -m demo --img_path data/random.jpg --json_path data/random_keypoints.json | ||

| ``` | ||

| (The demo only runs on the most confident bounding box, see `src/util/openpose.py:get_bbox`) | ||

|

|

||

| ### Training code/data | ||

|

|

||

| Coming soon. | ||

|

|

||

| ### Citation | ||

| If you use this code for your research, please consider citing: | ||

| ``` | ||

| @inProceedings{kanazawaHMR18, | ||

| title={End-to-end Recovery of Human Shape and Pose}, | ||

| author = {Angjoo Kanazawa | ||

| and Michael J. Black | ||

| and David W. Jacobs | ||

| and Jitendra Malik}, | ||

| booktitle={Computer Vision and Pattern Recognition (CVPR)}, | ||

| year={2018} | ||

| } | ||

| ``` |
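
The README says the demo derives a scale and bbox center from the openpose JSON and runs only on the most confident detection. The following is a minimal sketch of that idea, not the repository's `src/util/openpose.py:get_bbox`. The JSON layout (a `people` list holding flat `pose_keypoints_2d` arrays of `x, y, confidence` triples), the 0.2 confidence threshold, the use of the larger bbox side as the person's "length", and all function names are assumptions made for illustration; only the 150px target comes from the demo docstring.

```python
import json

TARGET_PERSON_SIZE = 150.0  # px; the demo docstring says "roughly 150px"
MIN_CONFIDENCE = 0.2        # assumed visibility threshold, not from the source


def bbox_from_keypoints(people, target=TARGET_PERSON_SIZE, min_conf=MIN_CONFIDENCE):
    """Return (scale, (cx, cy)) for the most confident person.

    `people` is a list of flat [x0, y0, c0, x1, y1, c1, ...] keypoint lists.
    """
    best, best_conf = None, -1.0
    for flat in people:
        triples = [flat[i:i + 3] for i in range(0, len(flat), 3)]
        visible = [(x, y) for x, y, c in triples if c > min_conf]
        if not visible:
            continue
        # Rank people by mean keypoint confidence (an assumed criterion).
        mean_conf = sum(c for _, _, c in triples) / len(triples)
        if mean_conf > best_conf:
            best_conf, best = mean_conf, visible
    if best is None:
        raise ValueError("no person with visible keypoints")

    xs = [x for x, _ in best]
    ys = [y for _, y in best]
    extent = max(max(xs) - min(xs), max(ys) - min(ys))
    if extent <= 0:
        raise ValueError("degenerate bounding box")

    center = ((min(xs) + max(xs)) / 2.0, (min(ys) + max(ys)) / 2.0)
    # Scale factor that resizes the person to roughly `target` pixels.
    return target / extent, center


def load_people(json_path, key="pose_keypoints_2d"):
    """Read keypoint lists from an OpenPose-style JSON file (assumed layout)."""
    with open(json_path) as f:
        data = json.load(f)
    return [person[key] for person in data.get("people", [])]
```

A call such as `bbox_from_keypoints(load_people("data/random_keypoints.json"))` would then yield the `(scale, center)` pair that the demo passes on to its crop step, with `center` in `(x, y)` order.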

Empty file.


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,135 @@ | ||

| """ | ||

| Demo of HMR. | ||

| Note that HMR requires the bounding box of the person in the image. The best performance is obtained when the max length of the person in the image is roughly 150px. | ||

| When only the image path is supplied, it assumes that the image is centered on a person whose length is roughly 150px. | ||

| Alternatively, you can supply the output of openpose to compute the bbox and the right scale factor. | ||

| Sample usage: | ||

| # On an image tightly cropped around the person | ||

| python -m demo --img_path data/im1963.jpg | ||

| python -m demo --img_path data/coco1.png | ||

| # On an image with openpose output | ||

| python -m demo --img_path data/random.jpg --json_path data/random_keypoints.json | ||

| """ | ||

| from __future__ import absolute_import | ||

| from __future__ import division | ||

| from __future__ import print_function | ||

|

|

||

| import sys | ||

| from absl import flags | ||

| import numpy as np | ||

|

|

||

| import skimage.io as io | ||

| import tensorflow as tf | ||

|

|

||

| from src.util import renderer as vis_util | ||

| from src.util import image as img_util | ||

| from src.util import openpose as op_util | ||

| import src.config | ||

| from src.RunModel import RunModel | ||

|

|

||

| flags.DEFINE_string('img_path', 'data/im1963.jpg', 'Image to run') | ||

| flags.DEFINE_string( | ||

| 'json_path', None, | ||

| 'If specified, uses the openpose output to crop the image.') | ||

|

|

||

|

|

||

| def visualize(img, proc_param, joints, verts, cam): | ||

| """ | ||

| Renders the result in original image coordinate frame. | ||

| """ | ||

| cam_for_render, vert_shifted, joints_orig = vis_util.get_original( | ||

| proc_param, verts, cam, joints, img_size=img.shape[:2]) | ||

|

|

||

| # Render results | ||

| skel_img = vis_util.draw_skeleton(img, joints_orig) | ||

| rend_img_overlay = renderer( | ||

| vert_shifted, cam=cam_for_render, img=img, do_alpha=True) | ||

| rend_img = renderer( | ||

| vert_shifted, cam=cam_for_render, img_size=img.shape[:2]) | ||

| rend_img_vp1 = renderer.rotated( | ||

| vert_shifted, 60, cam=cam_for_render, img_size=img.shape[:2]) | ||

| rend_img_vp2 = renderer.rotated( | ||

| vert_shifted, -60, cam=cam_for_render, img_size=img.shape[:2]) | ||

|

|

||

| import matplotlib.pyplot as plt | ||

| # plt.ion() | ||

| plt.figure(1) | ||

| plt.clf() | ||

| plt.subplot(231) | ||

| plt.imshow(img) | ||

| plt.title('input') | ||

| plt.axis('off') | ||

| plt.subplot(232) | ||

| plt.imshow(skel_img) | ||

| plt.title('joint projection') | ||

| plt.axis('off') | ||

| plt.subplot(233) | ||

| plt.imshow(rend_img_overlay) | ||

| plt.title('3D Mesh overlay') | ||

| plt.axis('off') | ||

| plt.subplot(234) | ||

| plt.imshow(rend_img) | ||

| plt.title('3D mesh') | ||

| plt.axis('off') | ||

| plt.subplot(235) | ||

| plt.imshow(rend_img_vp1) | ||

| plt.title('diff vp') | ||

| plt.axis('off') | ||

| plt.subplot(236) | ||

| plt.imshow(rend_img_vp2) | ||

| plt.title('diff vp') | ||

| plt.axis('off') | ||

| plt.draw() | ||

| plt.show() | ||

| # import ipdb | ||

| # ipdb.set_trace() | ||

|

|

||

|

|

||

| def preprocess_image(img_path, json_path=None): | ||

| img = io.imread(img_path) | ||

|

|

||

| if json_path is None: | ||

| scale = 1. | ||

| center = np.round(np.array(img.shape[:2]) / 2).astype(int) | ||

| # image center in (x,y) | ||

| center = center[::-1] | ||

| else: | ||

| scale, center = op_util.get_bbox(json_path) | ||

|

|

||

| crop, proc_param = img_util.scale_and_crop(img, scale, center, | ||

| config.img_size) | ||

|

|

||

| # Normalize image to [-1, 1] | ||

| crop = 2 * ((crop / 255.) - 0.5) | ||

|

|

||

| return crop, proc_param, img | ||

|

|

||

|

|

||

| def main(img_path, json_path=None): | ||

| sess = tf.Session() | ||

| model = RunModel(config, sess=sess) | ||

|

|

||

| input_img, proc_param, img = preprocess_image(img_path, json_path) | ||

| # Add batch dimension: 1 x D x D x 3 | ||

| input_img = np.expand_dims(input_img, 0) | ||

|

|

||

| joints, verts, cams, joints3d, theta = model.predict( | ||

| input_img, get_theta=True) | ||

|

|

||

| visualize(img, proc_param, joints[0], verts[0], cams[0]) | ||

|

|

||

|

|

||

| if __name__ == '__main__': | ||

| config = flags.FLAGS | ||

| config(sys.argv) | ||

|

|

||

| config.batch_size = 1 | ||

|

|

||

| renderer = vis_util.SMPLRenderer(face_path=config.smpl_face_path) | ||

|

|

||

| main(config.img_path, config.json_path) |
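
The arithmetic in `preprocess_image` and `main` can be checked in isolation. The sketch below reproduces three steps from the diff above (image center in `(x, y)` order, normalization to `[-1, 1]`, and adding the batch dimension) as standalone NumPy helpers. The function names are hypothetical and the cropping step (`img_util.scale_and_crop`) is deliberately left out, since its implementation is not shown here.

```python
import numpy as np


def image_center_xy(shape):
    """Center of an image with shape (height, width, ...), returned as (x, y)."""
    center_yx = np.round(np.array(shape[:2]) / 2).astype(int)
    # shape is (rows, cols), so reverse it to get (x, y).
    return center_yx[::-1]


def normalize_to_unit_range(crop):
    """Map pixel values from [0, 255] to [-1, 1]."""
    # Cast to float first so integer images are not truncated.
    return 2.0 * (np.asarray(crop, dtype=np.float64) / 255.0 - 0.5)


def add_batch_dim(img):
    """Turn a D x D x 3 image into a 1 x D x D x 3 batch."""
    return np.expand_dims(img, 0)
```

For a 240 x 320 image, `image_center_xy` gives `(160, 120)`, and pixel values 0 and 255 map to -1 and 1 respectively.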


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,5 @@ | ||

| models/ | ||

| logs*/ | ||

| *.json | ||

| *.DS_Store | ||

| *.pyc |


| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,72 @@ | ||

| # End-to-end Recovery of Human Shape and Pose | ||

|

|

||

| Angjoo Kanazawa, Michael J. Black, David W. Jacobs, Jitendra Malik | ||

| CVPR 2018 | ||

|

|

||

| [Project Page](https://akanazawa.github.io/hmr/) | ||

|  | ||

|

|

||

| ### Requirements | ||

| - Python 2.7 | ||

| - [TensorFlow](https://www.tensorflow.org/) tested on version 1.3 | ||

|

|

||

| ### Installation | ||

|

|

||

| #### Setup virtualenv | ||

| ``` | ||

| virtualenv venv_hmr | ||

| source venv_hmr/bin/activate | ||

| pip install -U pip | ||

| deactivate | ||

| source venv_hmr/bin/activate | ||

| pip install -r requirements.txt | ||

| ``` | ||

| #### Install TensorFlow | ||

| With GPU: | ||

| ``` | ||

| pip install tensorflow-gpu==1.3.0 | ||

| ``` | ||

| Without GPU: | ||

| ``` | ||

| pip install tensorflow==1.3.0 | ||

| ``` | ||

|

|

||

| ### Demo | ||

|

|

||

| 1. Download the pre-trained models | ||

| ``` | ||

| wget https://people.eecs.berkeley.edu/~kanazawa/cachedir/hmr/models.tar.gz && tar -xf models.tar.gz | ||

| ``` | ||

|

|

||

| 2. Run the demo | ||

| ``` | ||

| python -m demo --img_path data/coco1.png | ||

| python -m demo --img_path data/im1954.jpg | ||

| ``` | ||

|

|

||

| On images that are not tightly cropped, you can run | ||

| [openpose](https://github.com/CMU-Perceptual-Computing-Lab/openpose) and supply | ||

| its output JSON (run it with the `--write_json` option). | ||

| When `--json_path` is specified, the demo computes the scale and bbox center needed to run HMR: | ||

| ``` | ||

| python -m demo --img_path data/random.jpg --json_path data/random_keypoints.json | ||

| ``` | ||

| (The demo only runs on the most confident bounding box, see `src/util/openpose.py:get_bbox`) | ||

|

|

||

| ### Training code/data | ||

|

|

||

| Coming soon. | ||

|

|

||

| ### Citation | ||

| If you use this code for your research, please consider citing: | ||

| ``` | ||

| @inProceedings{kanazawaHMR18, | ||

| title={End-to-end Recovery of Human Shape and Pose}, | ||

| author = {Angjoo Kanazawa | ||

| and Michael J. Black | ||

| and David W. Jacobs | ||

| and Jitendra Malik}, | ||

| booktitle={Computer Vision and Pattern Recognition (CVPR)}, | ||

| year={2018} | ||

| } | ||

| ``` |

Empty file.
